
During our internship at Hinnerup Net A/S we were given a three-day challenge: try out the Oculus Rift SDK and see what we could come up with in such a short amount of time. Anything would be acceptable, as long as it used the Oculus Rift device and we could present a working demo on it.

You might think something like: “What? Only three days?! With no initial knowledge of 3D programming or VR? With no experience with Oculus Rift? Forget it! It simply can’t be done!” Well, as we will demonstrate, it’s far easier than you might expect to get up and running with the Oculus Rift device.

The first thing we did was head to the Oculus Rift homepage and download the Software Development Kit. Then we plugged in the Rift and tried to calibrate the unit. After putting the device on and spinning blindly around, we ran into a small issue: the software claimed that we weren’t spinning enough. After a few extra tries with the same error, and getting mildly motion-sick, we decided that calibration probably wasn’t important, since the Rift could already deliver a satisfactory picture. So we moved on.

We then took a look at how we could make our own “demo” for the Oculus Rift. From the Oculus Developer pages, we saw that it supported both Unity and the Unreal engine. Based on what we had heard about Unity and our existing curiosity about it, we decided to go the Unity route and see what would happen. We downloaded the Rift SDK for Unity and accepted the offer of a one-month free trial of Unity Pro. The SDK came with a Unity demo project for testing how the integration worked.

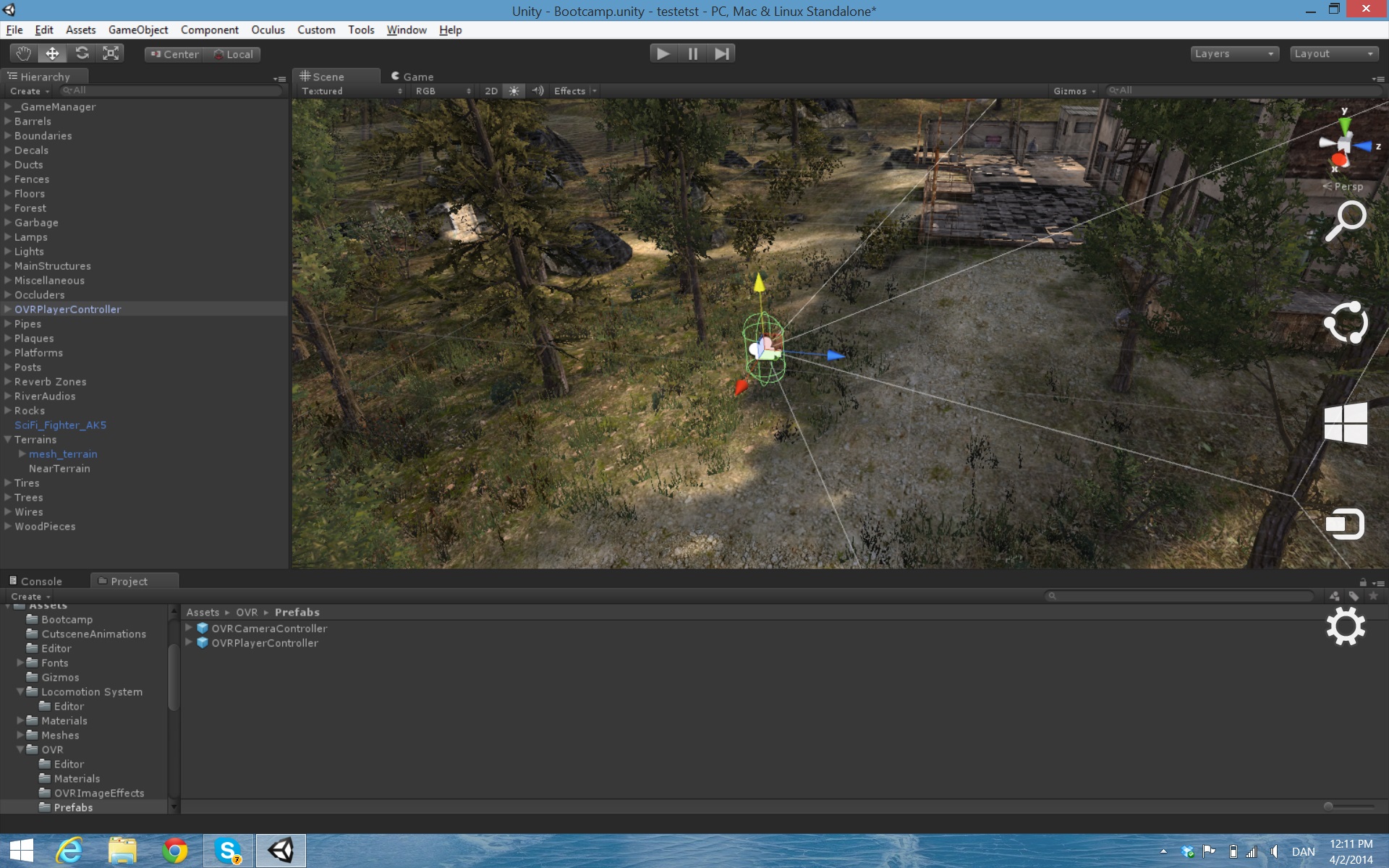

After playing around with the Unity SDK for some time, we decided to download a Unity project that did not have Rift support, with the goal of adding Oculus Rift support to it. Using the supplied Unity package, this was pretty straightforward. All we had to do was either replace the standard Unity camera with the supplied Rift camera, or replace the player controller with a Rift controller, depending on the type of project. This was the first time we had worked with Unity, so it took some time to familiarize ourselves with the tools and the API.

We decided to download a demo called “Bootcamp” from the Unity Asset Store and modify it to support Oculus Rift. We removed the player controller and replaced it with an “ovr player controller”, which was fairly easy to accomplish. After that we thought it could be fun to place a spaceship in the game, so we downloaded a spaceship model and added it to the project.

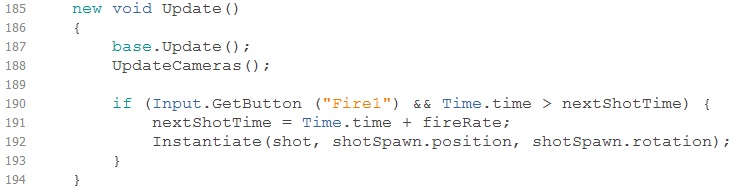

With that done, we wanted to try building something from scratch, so we inserted some terrain and blocked the edges with mountains. Shamelessly using parts of “Project: Space Shooter” from Unity’s Learn section, we added visible shots using a texture and one of the built-in shaders. To give the Rift controller the ability to shoot, we had to go into the scripting section and make some minor modifications to the code.

The Update method is called on each active object for every frame generated. Lines 190 to 194 are what we had to add to make a player able to shoot. The nextShotTime, fireRate, shot and shotSpawn are fields we had to add. Only shot and shotSpawn are of any real interest: shot is the actual GameObject that is spawned on line 192, and shotSpawn is an empty GameObject that we attached to the camera, so that its position is relative to the camera’s. That means the spawner follows the camera view, so shots spawn in the direction the player is looking. The Instantiate method basically just creates a new shot and spawns it at the position of the shotSpawn.
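As a rough sketch of the kind of change described above (not the exact lines from the modified controller script), the shooting logic might look something like this. The field names nextShotTime, fireRate, shot and shotSpawn come from the description; the class name `RiftShooter`, the `"Fire1"` button and the default fire rate are assumptions made for illustration, and the component is written as a standalone script rather than as an edit to the Rift controller itself.

```csharp
using UnityEngine;

// Hypothetical standalone component for illustration only. In our project
// the equivalent lines lived inside the Rift player controller's Update method.
public class RiftShooter : MonoBehaviour
{
    public GameObject shot;         // projectile prefab to spawn
    public GameObject shotSpawn;    // empty GameObject parented to the camera
    public float fireRate = 0.25f;  // assumed minimum delay between shots, in seconds

    private float nextShotTime;     // earliest time at which the next shot may be fired

    void Update()
    {
        // "Fire1" is the default fire button in Unity's input settings;
        // which button was actually used is an assumption here.
        if (Input.GetButton("Fire1") && Time.time > nextShotTime)
        {
            // Spawn the shot at the spawner's position and orientation.
            // Because shotSpawn is a child of the camera, this matches
            // the direction the player is looking.
            Instantiate(shot, shotSpawn.transform.position, shotSpawn.transform.rotation);
            nextShotTime = Time.time + fireRate;
        }
    }
}
```

In a sketch like this, shot and shotSpawn would be assigned by dragging the shot prefab and the spawner object onto the component in the Unity Inspector. The fireRate check simply stops a held-down button from spawning a new shot on every single frame.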

So, to sum it all up: in three days we went from not knowing anything about 3D programming, Virtual Reality, Oculus Rift or Unity, to being able to modify an existing Unity project and make it take full advantage of the immersive experience an Oculus Rift Virtual Reality display unit can offer.